As we move from simply launching AI agents to maintaining them at scale, many teams are finding that the real challenge begins in production. When real-world data and unpredictable user behaviors enter the mix, maintaining quality can feel like a moving target. To help us navigate this, we are thrilled to welcome Richard Young, Director of Partner Solutions Architecture at Arize AI, to the PagerDuty OnTour SF stage.

The partnership between PagerDuty and Arize isn't new; in fact, we've been working together for some time to standardize how we monitor our own internal agents. Our teams previously shared how we use Arize for "golden dataset" testing and regression-style test frameworks to keep our AI features, such as the Insights, Shift, and SRE Agents, reliable as they evolve.

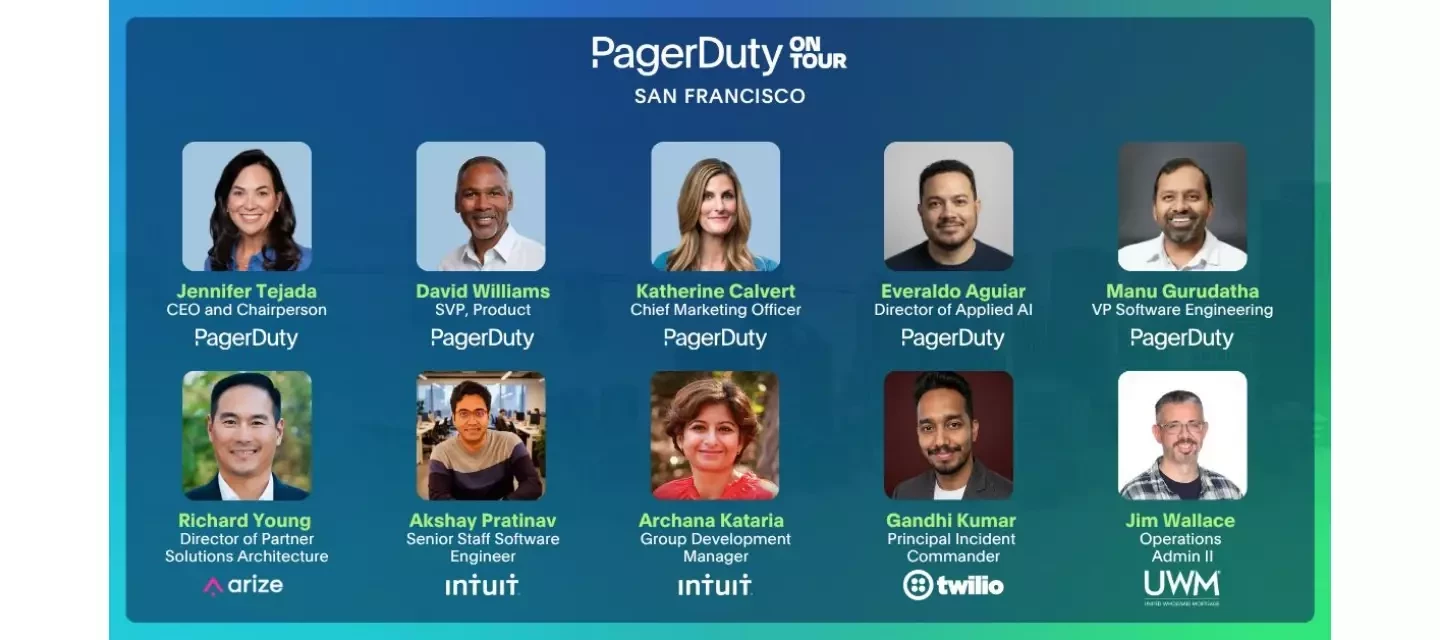

Richard will be joined by PagerDuty’s Everaldo Aguiar for a hands-on session that builds on these foundations. Drawing on insights from over 1 trillion inferences and 10 million monthly evaluation runs, they will share a proven reference architecture for instrumenting traces and evaluating critical user journeys with LLM judges.

The highlight of the session will be a live demo showing exactly what happens when an agent's correctness falls below an SLO. You’ll see PagerDuty agents coordinate a real-time response to detect, escalate, and resolve quality degradation with the same urgency as a system outage. Whether you are currently shipping agentic AI or planning your next sprint, you’ll walk away with a practical framework you can implement immediately.
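
To make the idea of a correctness SLO concrete, here is a minimal sketch of how judge verdicts could be rolled up and compared against a target. It is only an illustration, not the architecture Richard and Everaldo will present: the `EvalResult` shape, the function names, and the 95% target are all assumptions, and the final step simply prints a message where a real setup would open an incident.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    """One LLM-judge verdict for a single traced agent response (hypothetical shape)."""
    trace_id: str
    correct: bool


def correctness_rate(results: list[EvalResult]) -> float:
    """Fraction of evaluated responses that the judge marked correct."""
    if not results:
        raise ValueError("no evaluation results in window")
    return sum(r.correct for r in results) / len(results)


def slo_breached(results: list[EvalResult], target: float = 0.95) -> bool:
    """True when the window's correctness rate falls below the SLO target."""
    return correctness_rate(results) < target


# Example window (made-up data): 18 of 20 responses judged correct.
window = [EvalResult(trace_id=f"t{i}", correct=i >= 2) for i in range(20)]

if slo_breached(window, target=0.95):
    # Placeholder for the escalation step; a real system would page someone here.
    print("correctness below SLO: open an incident")
```

Treating the correctness rate like any other service-level indicator is what lets a quality regression follow the same detect, escalate, and resolve path as an outage.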

We can't wait to see this "signal-to-fix" loop in action on May 13th. Check out the full speaker lineup and let us know what questions you're bringing for the Arize team!